Recursive Feature Elimination (RFE) | Vibepedia

Contents

- 🎯 What is Recursive Feature Elimination (RFE)?

- 🛠️ How RFE Actually Works: The Engine Room

- 📈 Who Benefits Most from RFE?

- ⚖️ RFE vs. Other Feature Selection Methods

- 💡 Practical Tips for Using RFE Effectively

- ⚠️ Potential Pitfalls and How to Avoid Them

- 🚀 The Future of RFE and Feature Selection

- 📚 Further Reading and Resources

- Frequently Asked Questions

- Related Topics

Overview

Recursive Feature Elimination (RFE) is a feature selection algorithm from machine learning and data science. Its primary goal is to pare down a dataset's features (columns) to the ones most relevant for building a predictive model. Think of it as a meticulous editor, cutting away extraneous words to leave only the most impactful sentences. Introduced by Guyon et al. in 2002, RFE has become a go-to method for tackling high-dimensional data where the number of features threatens to overwhelm the learning process. It's particularly useful when you suspect many of your input variables are redundant or irrelevant, acting as noise that can degrade model performance and increase computational cost.

🛠️ How RFE Actually Works: The Engine Room

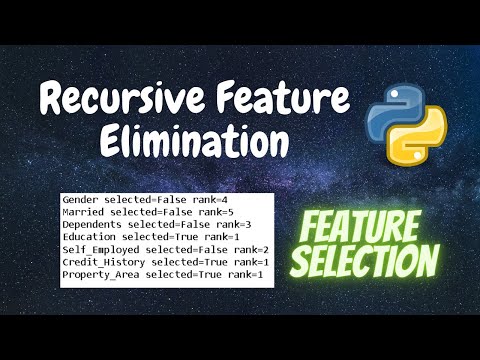

The mechanics of RFE are elegantly iterative. It begins by training a model (like a Support Vector Machine or Logistic Regression) on the full set of features. Then, it ranks these features by importance, typically using the coefficients or feature importances the fitted model exposes. The least important feature (or a fixed number of them per round) is then eliminated. This process repeats: retrain the model on the reduced feature set, re-rank, and eliminate again. This continues until a desired number of features is reached or until a predefined stopping criterion is met. The 'recursive' nature is key: because importances are recomputed after every elimination, a feature that looked weak alongside a correlated partner can rise in rank once that partner is gone. Note that this is a greedy procedure, so it aims for a good subset of features rather than a provably optimal one. This iterative refinement is what distinguishes it from simpler, one-off selection methods.
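The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the scikit-learn implementation: it assumes a plain least-squares linear model as the ranking estimator, uses the absolute value of each weight as the importance score, and runs on synthetic data in which only columns 1, 4, and 6 carry signal. The function names `fit_linear_weights` and `recursive_feature_elimination` are hypothetical, chosen for this example.

```python
import numpy as np


def fit_linear_weights(X, y):
    """Fit ordinary least squares and return one weight per column of X."""
    # Append an intercept column so the feature weights are not distorted
    # by a non-zero mean in y.
    X_aug = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(X_aug, y, rcond=None)
    return coef[:-1]  # drop the intercept term


def recursive_feature_elimination(X, y, n_features_to_keep, step=1):
    """Return the original column indices that survive the elimination loop."""
    remaining = list(range(X.shape[1]))
    while len(remaining) > n_features_to_keep:
        # 1. Retrain on the features that are still in play.
        weights = fit_linear_weights(X[:, remaining], y)
        # 2. Rank: a smaller |weight| means a less important feature.
        importance = np.abs(weights)
        # 3. Eliminate the `step` weakest features, without overshooting.
        n_drop = min(step, len(remaining) - n_features_to_keep)
        drop_positions = set(np.argsort(importance)[:n_drop].tolist())
        remaining = [
            feature
            for position, feature in enumerate(remaining)
            if position not in drop_positions
        ]
    return remaining


# Synthetic data (an assumption for this sketch): 8 standardized features,
# of which only columns 1, 4 and 6 influence the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 8))
X = (X - X.mean(axis=0)) / X.std(axis=0)
y = 3.0 * X[:, 1] - 2.0 * X[:, 4] + 1.5 * X[:, 6] + rng.normal(scale=0.1, size=200)

selected = recursive_feature_elimination(X, y, n_features_to_keep=3)
print(selected)
```

The features are standardized before ranking because weight magnitudes are only comparable when the columns share a scale. Raising `step` trades ranking granularity for fewer retraining rounds.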

📈 Who Benefits Most from RFE?

RFE is a powerful ally for data scientists and machine learning engineers grappling with datasets that are, to put it mildly, bloated. If you're working with genomic data, text analysis where each word is a feature, or any domain with thousands or millions of potential predictors, RFE can be a lifesaver. It's especially beneficial when building models that are sensitive to feature dimensionality, such as linear models or tree-based models that can suffer from overfitting. Researchers and practitioners aiming to improve model interpretability, reduce training time, and enhance predictive accuracy by removing noise will find RFE an indispensable tool in their arsenal. It's about making your models smarter, not just bigger.

⚖️ RFE vs. Other Feature Selection Methods

Compared to other feature selection techniques, RFE offers a distinct advantage: because it ranks features with a model trained on all remaining features together, it accounts for how features act jointly rather than one at a time. Filter methods, for instance, assess features independently based on statistical properties (like correlation with the target variable) before model training. RFE is usually grouped with wrapper methods because it repeatedly retrains a model, but it ranks features by the model's own weights or importance scores rather than by scoring every candidate subset, which makes it far cheaper than brute-force exhaustive search. While Lasso regularization can also perform feature selection by shrinking coefficients to zero, RFE lets you set the number of retained features directly and provides an explicit, step-wise reduction that can be easier to interpret and control. Each method has its place, but RFE's iterative pruning is a robust approach for many complex scenarios.
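To make the comparison concrete, here is a hedged sketch that runs one representative of each family on the same synthetic regression problem using scikit-learn. The dataset, the choice of `f_regression` as the filter score, `LinearRegression` as the RFE estimator, and `alpha=1.0` for the Lasso are all assumptions made for illustration; the three selectors will not necessarily agree on which features to keep.

```python
from sklearn.datasets import make_regression
from sklearn.feature_selection import RFE, SelectFromModel, SelectKBest, f_regression
from sklearn.linear_model import Lasso, LinearRegression
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumed for this sketch): 20 features, 5 of them informative.
X, y = make_regression(
    n_samples=300, n_features=20, n_informative=5, noise=5.0, random_state=0
)
X = StandardScaler().fit_transform(X)

# Filter: score each feature on its own, before any model is trained.
filter_mask = SelectKBest(score_func=f_regression, k=5).fit(X, y).get_support()

# RFE: retrain a model repeatedly, dropping the weakest feature each round.
rfe_mask = RFE(LinearRegression(), n_features_to_select=5).fit(X, y).get_support()

# Embedded: a single Lasso fit; features with (near-)zero coefficients are dropped.
lasso_mask = SelectFromModel(Lasso(alpha=1.0)).fit(X, y).get_support()

for name, mask in [("filter", filter_mask), ("RFE", rfe_mask), ("Lasso", lasso_mask)]:
    kept = [index for index, keep in enumerate(mask) if keep]
    print(f"{name:>6}: {kept}")
```

Note the difference in control: the filter and RFE keep exactly the number of features requested, while the number kept by the Lasso depends indirectly on `alpha`.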

💡 Practical Tips for Using RFE Effectively

When implementing RFE, it's crucial to use a model that provides a clear measure of feature importance; in scikit-learn, that means an estimator that exposes coefficients or feature importances after fitting. Scikit-learn's RFE implementation in Python is a popular choice, allowing you to specify the estimator, the number of features to select, and how many features to drop per iteration. If the ranking is based on coefficients, put the features on a common scale first, since coefficient magnitudes are not comparable across features measured in different units. Always perform RFE within a cross-validation framework to ensure the selected features generalize well to unseen data. Avoid selecting too few features, which can lead to underfitting, or too many, which might not offer significant benefits over the original set. Experiment with different estimators (e.g., Random Forest, Gradient Boosting) as the 'best' feature subset can depend on the underlying model.
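A minimal sketch of the scikit-learn usage mentioned above might look like the following. The synthetic dataset, the choice of `LogisticRegression` as the estimator, and the target of 4 features are assumptions for illustration, not recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumed for this sketch): 15 features, 4 of them informative.
X, y = make_classification(
    n_samples=300, n_features=15, n_informative=4, n_redundant=2, random_state=42
)
# Standardize so that coefficient magnitudes are comparable across features.
X = StandardScaler().fit_transform(X)

selector = RFE(
    estimator=LogisticRegression(max_iter=1000),
    n_features_to_select=4,  # stop once 4 features remain
    step=1,                  # drop one feature per iteration
)
selector.fit(X, y)

print("kept mask:", selector.support_)   # True for each selected feature
print("ranking:  ", selector.ranking_)   # 1 = selected; larger = dropped earlier
X_reduced = selector.transform(X)        # keep only the selected columns
print("reduced shape:", X_reduced.shape)
```

Swapping in a tree-based estimator such as `RandomForestClassifier` only requires changing the `estimator` argument, which makes it easy to check how much the selected subset depends on the underlying model.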

⚠️ Potential Pitfalls and How to Avoid Them

One common pitfall with RFE is the risk of overfitting to the specific training data during the selection process itself. If RFE is run once on the full dataset and the chosen features are then evaluated by cross-validation on that same data, the held-out folds have already influenced the selection, and the resulting performance estimate will be optimistic. This is why running the selection inside each cross-validation fold is non-negotiable. Another issue arises if the chosen estimator's feature importance metric is unreliable or biased. For example, highly correlated features might receive similar importance scores, so which one gets eliminated first can be essentially arbitrary. Be mindful of the computational cost; RFE can be slow on very large datasets due to repeated model training. Finally, don't blindly trust the 'optimal' subset; always validate the final model's performance with the selected features on data that played no part in the selection.
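One way to keep the selection step from leaking information is to place it inside a scikit-learn `Pipeline`, so that scaling, RFE, and the final model are all refit on the training portion of every fold. The sketch below assumes a synthetic dataset and logistic regression for both the ranking and the final model; the step names `scale`, `select`, and `model` are arbitrary labels.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumed for this sketch): 25 features, 5 of them informative.
X, y = make_classification(
    n_samples=400, n_features=25, n_informative=5, n_redundant=3, random_state=0
)

pipeline = Pipeline(
    steps=[
        ("scale", StandardScaler()),
        # Feature selection is part of the pipeline, so each fold selects
        # features using only that fold's training data.
        ("select", RFE(LogisticRegression(max_iter=1000), n_features_to_select=5)),
        ("model", LogisticRegression(max_iter=1000)),
    ]
)

scores = cross_val_score(pipeline, X, y, cv=5)
print("fold accuracies:", scores.round(3))
print("mean accuracy:  ", round(scores.mean(), 3))
```

Because the selected features can differ from fold to fold, the scores estimate how well the whole procedure (selection plus model) generalizes, not how good one particular subset is.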

🚀 The Future of RFE and Feature Selection

The landscape of feature selection is constantly evolving, and RFE, while a mature technique, is not static. Future developments might involve integrating RFE with more sophisticated deep learning architectures or exploring adaptive elimination strategies that adjust based on model performance during the recursion. There's also ongoing research into making RFE more computationally efficient, perhaps through parallelization or approximation techniques. As datasets continue to grow in size and complexity, the demand for effective, automated feature selection methods like RFE will only increase. The challenge lies in balancing predictive power with interpretability and computational feasibility, a tightrope RFE is well-equipped to walk.

📚 Further Reading and Resources

For those looking to implement RFE or understand its theoretical underpinnings, several resources are invaluable. The original paper by Guyon, Weston, Barnhill, and Vapnik, "Gene selection for cancer classification using support vector machines" (2002), is a foundational text. For practical Python implementation, the Scikit-learn documentation on feature selection is excellent. Online courses on machine learning algorithms often cover RFE in detail. Books like "An Introduction to Statistical Learning" by James, Witten, Hastie, and Tibshirani provide broader context on feature selection within statistical modeling. Exploring Kaggle competitions also offers real-world examples of RFE in action.

Key Facts

- Year: 2002

- Origin: Guyon, I., Weston, J., Barnhill, S., & Vapnik, V. (2002). Gene selection for cancer classification using support vector machines. *Machine Learning*, *46*(1-3), 389-422.

- Category: Machine Learning / Data Science

- Type: Algorithm/Technique

Frequently Asked Questions

What is the main goal of Recursive Feature Elimination (RFE)?

The primary goal of RFE is to systematically reduce the number of features in a dataset to identify the most relevant subset for building a predictive model. This helps improve model performance, reduce training time, and enhance interpretability by removing redundant or irrelevant features.

What types of models can be used with RFE?

RFE can be used with any model that provides a measure of feature importance, such as coefficients in linear models (like Logistic Regression) or feature importance scores in tree-based models (like Random Forest). Support Vector Machines are also commonly used, typically with a linear kernel so that a weight per feature is available for ranking.

How does RFE differ from other feature selection methods?

Unlike filter methods that assess features independently, RFE ranks features using a trained model's coefficients or importance scores and does so iteratively, retraining after each elimination. It's more targeted than brute-force search and, unlike regularization techniques like Lasso, lets you fix the size of the feature subset directly, though it can be more computationally intensive.

Is RFE suitable for all types of data?

RFE is particularly effective for datasets with a large number of features (high dimensionality), where feature redundancy or irrelevance is suspected. It's less critical for datasets with a small, inherently informative feature set, where simpler methods might suffice.

What are the risks of using RFE?

The main risks include overfitting the feature selection process to the training data, leading to poor generalization. There's also a risk of arbitrary feature elimination if feature importance scores are not reliable, and significant computational cost for large datasets due to repeated model training.

How can I implement RFE in Python?

The most common way to implement RFE in Python is by using the Scikit-learn library. The sklearn.feature_selection.RFE class allows you to specify your chosen estimator and the desired number of features to select.
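If the desired number of features is not known in advance, scikit-learn also provides `sklearn.feature_selection.RFECV`, which runs the elimination under cross-validation and keeps the feature count that scored best. The following is a minimal sketch under assumed settings (synthetic data, logistic regression, 5-fold cross-validation, accuracy as the score):

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumed for this sketch): 12 features, 3 of them informative.
X, y = make_classification(
    n_samples=300, n_features=12, n_informative=3, n_redundant=2, random_state=1
)
X = StandardScaler().fit_transform(X)

selector = RFECV(
    estimator=LogisticRegression(max_iter=1000),
    step=1,                    # drop one feature per iteration
    cv=5,                      # 5-fold cross-validation
    scoring="accuracy",
    min_features_to_select=1,  # never go below one feature
)
selector.fit(X, y)

print("features kept:", selector.n_features_)
print("kept mask:    ", selector.support_)
```

The score used to pick the feature count comes from the same data that drove the selection, so it is still worth confirming the final model on a separate held-out set.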