AI Ethics Challenges | Vibepedia


Contents

- 🤖 Introduction to AI Ethics Challenges

- 📊 Bias and Fairness in AI Systems

- 🔒 Privacy and Security Concerns

- 🤝 Human-AI Collaboration and Accountability

- 🚫 Job Displacement and Economic Impact

- 🌎 Global AI Governance and Regulations

- 📈 Transparency and Explainability in AI

- 👥 AI for Social Good and Humanitarian Applications

- 🚨 AI Misuse and Cybersecurity Threats

- 💡 Future of AI Ethics and Emerging Trends

- 📚 Conclusion and Recommendations

- Frequently Asked Questions

- Related Topics

Overview

The development and deployment of artificial intelligence (AI) systems have raised significant ethical concerns about bias, privacy, transparency, and accountability. As AI becomes integrated into more areas of life, from healthcare to finance, the need for robust ethical frameworks and guidelines has become more pressing. According to a report by the MIT Initiative on the Digital Economy, 71% of executives believe that AI will be critical to their organization's success, while 63% also express concerns about its potential risks and downsides. The challenges are multifaceted, involving societal, cultural, and economic factors as well as technical ones. For instance, the Gender Shades study by Joy Buolamwini and Timnit Gebru (2018) found that commercial facial analysis systems had error rates of up to 34.7% for darker-skinned women, highlighting the need for more diverse and representative training data. The use of AI in decision-making processes such as hiring and loan approval has likewise raised concerns about fairness and potential discrimination. As the field evolves, it is essential to address these challenges and to develop AI systems that are aligned with human values and promote social good. Key figures such as Nick Bostrom, founding director of the Future of Humanity Institute at Oxford (which closed in 2024), and organizations such as the Partnership on AI, founded by companies including Google, Amazon, and Facebook, have been influential in shaping the debate. The topic is highly contested, with a controversy spectrum rating of 8 out of 10, reflecting intense debate and disagreement among experts and stakeholders.

🤖 Introduction to AI Ethics Challenges

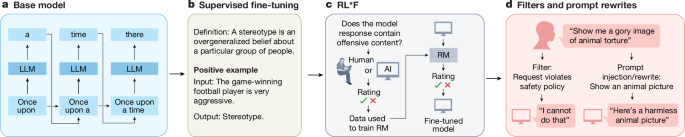

The development and deployment of artificial intelligence (AI) systems have raised significant ethical concerns, creating a growing need for AI ethics frameworks and guidelines. As AI technologies become more pervasive, the challenges associated with their design, deployment, and use need to be addressed directly. One primary concern is bias in AI systems, which can perpetuate existing social inequalities and discriminate against certain groups. To mitigate these risks, researchers and developers are exploring techniques for fairness in AI and explainable AI. At the same time, the integration of AI with other technologies, such as the Internet of Things (IoT) and blockchain, is creating new opportunities for innovation and growth.

📊 Bias and Fairness in AI Systems

Bias and fairness are critical concerns because biased AI systems can significantly affect individuals and society. For instance, facial recognition systems have been shown to be less accurate for people with darker skin tones, which can lead to misidentification and wrongful arrest. To address these issues, researchers are developing methods for debiasing AI models and for measuring fairness in AI decision-making, for example by comparing error rates across demographic groups. Diverse datasets and inclusive design principles can also reduce the risk of bias and promote more equitable outcomes. However, the complexity of AI systems and the lack of transparency in AI decision-making can make bias hard to identify and address.
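One common way to check for the kind of accuracy disparity described above is to compute a model's error rate separately for each demographic group and compare the results. The following is a minimal sketch of that idea, not a complete fairness audit. The function names (`group_error_rates`, `error_rate_gap`) and the toy labels are hypothetical and were invented for illustration.

```python
from collections import defaultdict


def group_error_rates(y_true, y_pred, groups):
    """Return {group: fraction of misclassified examples} for each group."""
    errors = defaultdict(int)
    counts = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        counts[group] += 1
        if truth != pred:
            errors[group] += 1
    return {g: errors[g] / counts[g] for g in counts}


def error_rate_gap(rates):
    """Largest difference in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data, invented for illustration only.
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = group_error_rates(y_true, y_pred, groups)
print(rates)                  # {'a': 0.0, 'b': 0.75}
print(error_rate_gap(rates))  # 0.75
```

A large gap, as in this toy data, signals that the model performs unevenly across groups. It does not by itself explain why, and other fairness metrics may be more appropriate depending on the application.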

🔒 Privacy and Security Concerns

The collection and use of personal data by AI systems raise significant privacy concerns. As AI technologies become more pervasive, there is a growing need for robust data protection measures and cybersecurity protocols to safeguard sensitive information. Moreover, the use of AI in surveillance and predictive policing has sparked debates about the balance between public safety and individual civil liberties. To address these concerns, policymakers and developers are exploring new approaches to data governance and AI regulation. Furthermore, the development of privacy-preserving AI techniques, such as federated learning and homomorphic encryption, can help to protect sensitive information while still enabling AI-driven innovation.
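To make the idea behind federated learning more concrete, the sketch below shows a simplified form of federated averaging: each client fits a model on its own data, and only the resulting model weight, never the raw data, is sent to a server that averages the weights. It is an illustrative sketch under strong assumptions (a one-parameter linear model, hypothetical client data, and no secure aggregation or differential privacy), not a production protocol. The names `local_update` and `federated_average` are invented for this example.

```python
def local_update(weight, data, lr=0.05, epochs=20):
    """Run gradient descent on mean squared error for y = weight * x,
    using only this client's local (x, y) pairs."""
    for _ in range(epochs):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(global_weight, clients, rounds=10):
    """Each round, clients train locally and the server averages their
    weights, weighted by the number of local examples."""
    for _ in range(rounds):
        updates = [(local_update(global_weight, data), len(data)) for data in clients]
        total = sum(n for _, n in updates)
        global_weight = sum(w * n for w, n in updates) / total
    return global_weight


# Hypothetical client datasets; every point lies on the line y = 2x.
clients = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0)],
    [(0.5, 1.0), (1.5, 3.0), (2.5, 5.0)],
]

w = federated_average(0.0, clients)
print(round(w, 6))  # close to 2.0, the slope shared by all clients
```

Keeping raw data on the client reduces exposure, but shared model updates can still leak information, which is why federated learning is often combined with other protections in practice.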

🤝 Human-AI Collaboration and Accountability

Collaboration between humans and AI systems is critical to ensuring that AI technologies are developed and used responsibly. As AI becomes more autonomous, it becomes more important to establish who is accountable for AI decisions and to keep AI systems transparent. Human-centered design principles can help to promote more effective and equitable human-AI collaboration. However, the increasing reliance on AI systems also raises concerns about job displacement and the need for worker retraining programs. To address these challenges, educators and policymakers are exploring new approaches to AI education and workforce development. Additionally, AI for social good applications, such as those in healthcare and education, can help to promote more positive outcomes and mitigate the risks associated with AI.

🚫 Job Displacement and Economic Impact

The deployment of AI systems can have significant economic impacts, including job displacement and changes to the nature of work. As AI technologies become more pervasive, policymakers and business leaders need strategies for mitigating the negative consequences of automation and promoting more equitable economic outcomes. The use of AI in industry can drive productivity growth and innovation, but it also raises concerns about intellectual property protection and competition law. To address these challenges, researchers and policymakers are exploring new approaches to AI governance and regulatory frameworks. Furthermore, humanitarian applications of AI, such as disaster response and aid delivery, can help to promote more positive outcomes and mitigate the risks associated with AI.

🌎 Global AI Governance and Regulations

Global governance of AI is critical to ensuring that AI technologies are developed and used responsibly. As AI becomes more pervasive, there is a growing need for international cooperation and agreement on AI regulation and AI standards. The use of AI within governance itself can make decision-making more effective and efficient, but it also raises concerns about bias in AI systems and the need for diversity in AI development. To address these challenges, policymakers and developers are exploring new approaches to global AI governance and international cooperation. Additionally, AI applications for sustainable development, such as climate change mitigation and sustainable energy, can help to promote more positive outcomes and mitigate the risks associated with AI.

📈 Transparency and Explainability in AI

Transparency and explainability are critical to ensuring that AI technologies are developed and used responsibly. As AI systems become more autonomous, people affected by their decisions need to be able to understand how those decisions were reached. Model interpretability techniques can help: feature importance scores estimate how much each input contributes to a model's predictions, and partial dependence plots show how the average prediction changes as a single input varies. However, the complexity of AI systems and the lack of standardization in AI can make transparency and explainability difficult to ensure, and researchers and developers continue to explore new approaches to AI explainability and model interpretability. Furthermore, AI for social good applications, such as those in healthcare and education, can help to promote more positive outcomes and mitigate the risks associated with AI.
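The two interpretability techniques named above, feature importance and partial dependence, can be sketched with nothing more than the standard library. The example below uses permutation importance, one common way of estimating feature importance: shuffle one feature and measure how much the model's error rises. The `model` function stands in for a hypothetical black-box predictor, and the data is randomly generated for illustration; neither comes from a real system.

```python
import random


def model(row):
    """Hypothetical black-box model: relies strongly on feature 0,
    weakly on feature 1, and not at all on feature 2."""
    return 3.0 * row[0] + 0.5 * row[1]


def mse(predict, X, y):
    """Mean squared error of predict over rows X with targets y."""
    return sum((predict(r) - t) ** 2 for r, t in zip(X, y)) / len(X)


def permutation_importance(predict, X, y, feature, rng):
    """Increase in error after shuffling one feature column."""
    baseline = mse(predict, X, y)
    column = [r[feature] for r in X]
    rng.shuffle(column)
    X_perm = [r[:feature] + [v] + r[feature + 1:] for r, v in zip(X, column)]
    return mse(predict, X_perm, y) - baseline


def partial_dependence(predict, X, feature, grid):
    """Average prediction when one feature is forced to each grid value."""
    return [
        sum(predict(r[:feature] + [v] + r[feature + 1:]) for r in X) / len(X)
        for v in grid
    ]


rng = random.Random(0)
X = [[rng.random(), rng.random(), rng.random()] for _ in range(200)]
y = [model(r) for r in X]

importances = [permutation_importance(model, X, y, f, rng) for f in range(3)]
pd_curve = partial_dependence(model, X, 0, [0.0, 0.5, 1.0])
print(importances)  # feature 0 largest, feature 2 exactly 0.0
print(pd_curve)     # rises by about 3.0 as feature 0 goes from 0 to 1
```

Both techniques treat the model as a black box, so they apply to any predictor. They describe average behaviour, however, and can mislead when features are strongly correlated.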

🚨 AI Misuse and Cybersecurity Threats

The misuse of AI systems can have significant consequences, including cybersecurity threats and data breaches. As AI technologies become more pervasive, policymakers and developers need to prioritize AI security measures and cybersecurity protocols. AI can also strengthen cybersecurity by making threat detection and incident response more effective and efficient, but this raises concerns about bias in AI systems and the need for diversity in AI development. To address these challenges, researchers and policymakers are exploring new approaches to preventing AI misuse and improving cybersecurity.

💡 Future of AI Ethics and Emerging Trends

How AI ethics develops in the coming years will shape whether AI technologies are developed and used responsibly. As AI becomes more pervasive, policymakers and developers need to prioritize AI ethics frameworks and guidelines. The use of AI in emerging technologies, such as quantum computing and extended reality, can help to drive innovation and growth, but it also raises concerns about job displacement and the need for worker retraining programs. To address these challenges, researchers and policymakers are exploring new approaches to AI ethics and emerging technologies. Additionally, AI for social good applications, such as those in healthcare and education, can help to promote more positive outcomes and mitigate the risks associated with AI.

📚 Conclusion and Recommendations

In conclusion, the development and deployment of AI systems raise significant ethical concerns and create a growing need for AI ethics frameworks and guidelines. As AI technologies become more pervasive, the challenges associated with their design, deployment, and use must be addressed. Prioritizing AI for social good applications and promoting more effective and equitable human-AI collaboration can help to mitigate the risks associated with AI and promote more positive outcomes. The use of AI in emerging technologies can drive innovation and growth, but it requires careful consideration of the potential consequences.

Key Facts

- Year: 2022

- Origin: Stanford University's Center for Artificial Intelligence in Society

- Category: Technology

- Type: Concept

Frequently Asked Questions

What are the primary concerns associated with AI ethics?

The primary concerns associated with AI ethics include bias and unfairness in AI systems, privacy and security risks, unclear accountability in human-AI collaboration, job displacement and its economic impact, and gaps in global AI governance and regulation. Additionally, the use of AI in emerging technologies, such as quantum computing and extended reality, raises concerns about job displacement and the need for worker retraining programs. To address these challenges, researchers and policymakers are exploring new approaches to AI ethics and emerging technologies. Furthermore, AI for social good applications, such as those in healthcare and education, can help to promote more positive outcomes and mitigate the risks associated with AI.

How can we ensure transparency and explainability in AI systems?

To ensure transparency and explainability in AI systems, researchers and developers are exploring approaches to model interpretability, such as feature importance and partial dependence plots. Privacy-preserving techniques, such as federated learning and homomorphic encryption, address a related but separate goal: they protect sensitive data rather than explain a model's decisions. Additionally, AI explainability frameworks and guidelines can help to promote more transparent and explainable AI systems. However, the complexity of AI systems and the lack of standardization in AI can make transparency and explainability difficult to ensure.

What are the potential consequences of AI misuse?

The potential consequences of AI misuse include cybersecurity threats, data breaches, and physical harm. Moreover, the use of AI in malicious activities, such as cyber attacks and surveillance, can have significant consequences for individuals and society. To address these challenges, policymakers and developers are prioritizing the development of AI security measures and cybersecurity protocols. Furthermore, the development of AI for cybersecurity applications, such as threat detection and incident response, can help to promote more positive outcomes and mitigate the risks associated with AI.

How can we promote more effective and equitable human-AI collaboration?

To promote more effective and equitable human-AI collaboration, researchers and developers are exploring new approaches to human-centered design and human-AI interaction. Moreover, the use of techniques, such as explainable AI and model interpretability, can help to promote more transparent and explainable AI systems. Additionally, the development of AI ethics frameworks and guidelines can help to promote more responsible and equitable AI development. However, the increasing reliance on AI systems also raises concerns about job displacement and the need for worker retraining programs.

What are the potential benefits of AI for social good applications?

The potential benefits of AI for social good applications include improved healthcare outcomes, enhanced education, and more effective disaster response. The use of AI in areas such as humanitarian aid and sustainable development can likewise help to promote more positive outcomes and mitigate the risks associated with AI. Additionally, frameworks and guidelines for AI for social good can help to promote more responsible and equitable AI development. However, the complexity of AI systems and the lack of standardization in AI can make it challenging to ensure that AI is used for social good.

How can we ensure that AI is developed and used responsibly?

To ensure that AI is developed and used responsibly, policymakers and developers are prioritizing the development of AI ethics frameworks and guidelines. Moreover, the use of techniques, such as explainable AI and model interpretability, can help to promote more transparent and explainable AI systems. Additionally, the development of AI for social good applications, such as healthcare and education, can help to promote more positive outcomes and mitigate the risks associated with AI. However, the increasing reliance on AI systems also raises concerns about job displacement and the need for worker retraining programs.

What are the potential risks associated with AI?

The potential risks associated with AI include biased and unfair outcomes, threats to privacy and security, weak accountability for AI-assisted decisions, job displacement and wider economic disruption, and inconsistent global governance and regulation. Moreover, the use of AI in emerging technologies, such as quantum computing and extended reality, raises concerns about job displacement and the need for worker retraining programs. To address these challenges, researchers and policymakers are exploring new approaches to AI ethics and emerging technologies. Furthermore, AI for social good applications, such as those in healthcare and education, can help to promote more positive outcomes and mitigate the risks associated with AI.